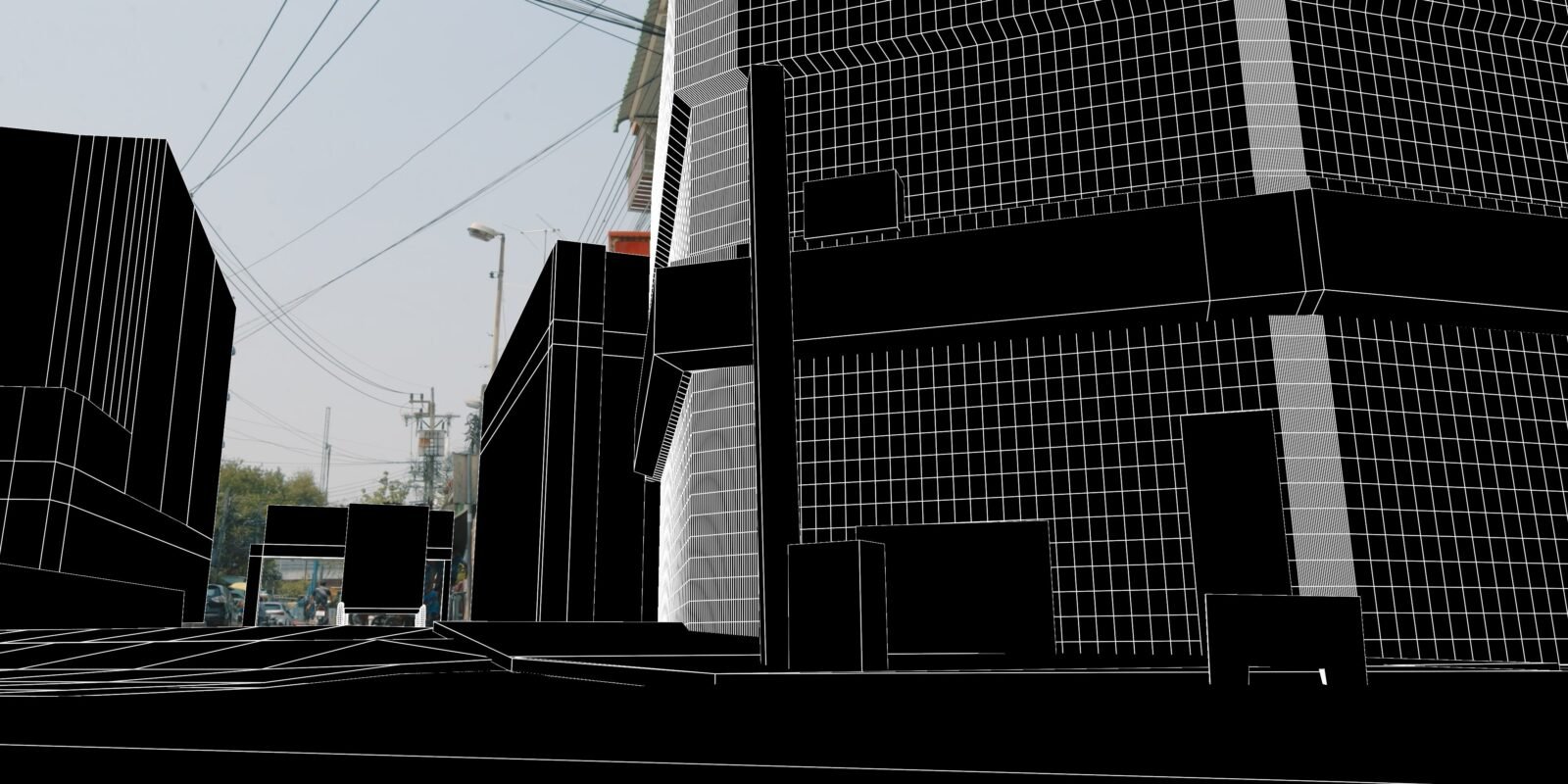

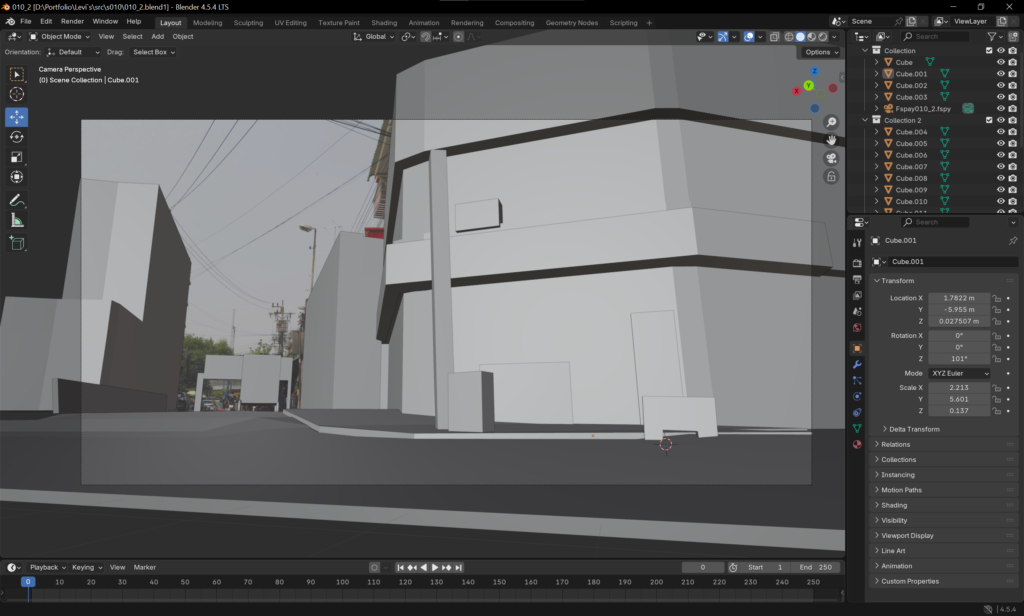

Scene Geometry — Environment Reconstruction Workflow

Once cameras were solved, we rebuilt key environment geometry for realistic lighting and shadow interaction.

This included streets, walls, props, and ground planes — essentially everything the robot would physically interact with.

For this project, 90% of the geometry was created in Blender, as the majority of shots required only basic structural accuracy without heavy modeling detail.

Blender provided a fast, flexible way to block out volumes, check parallax alignment, and prepare geometry for lighting and compositing.

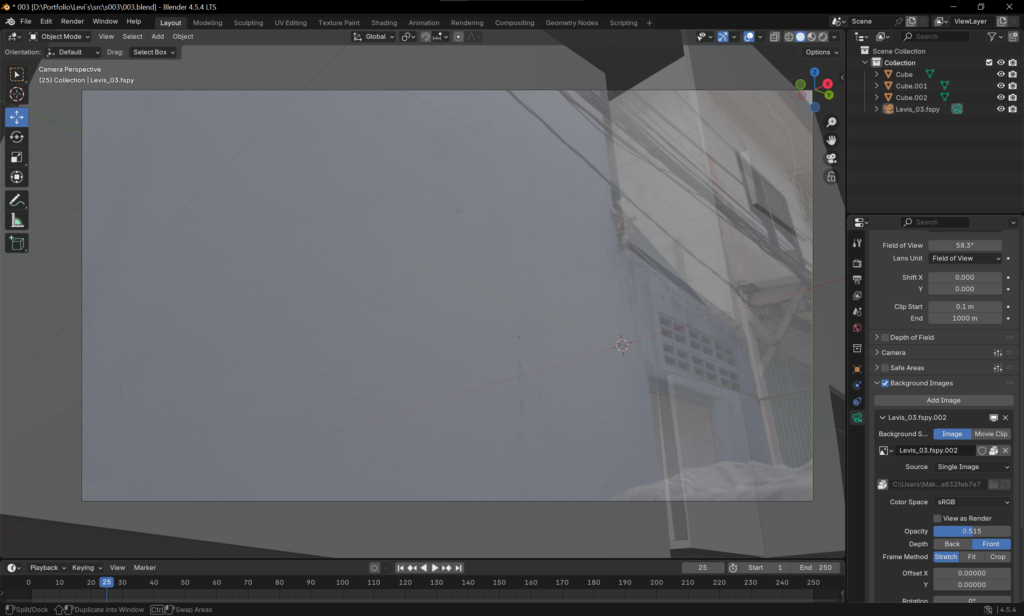
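One way to put a number on a parallax alignment check is to reproject proxy vertices through the solved camera and compare them with the 2D tracks they should land on. The sketch below is a minimal illustration, not the pipeline used on this project. It assumes an ideal pinhole camera looking down -Z with square pixels and no lens distortion, and that the points are already in camera space. The function names and camera values are hypothetical.

```python
import math


def project_point(point, focal_mm, sensor_width_mm, image_width_px, image_height_px):
    """Project a camera-space point to pixel coordinates (origin at top-left).

    Assumes an ideal pinhole camera looking down -Z, square pixels,
    and no lens distortion.
    """
    x, y, z = point
    if z >= 0:
        raise ValueError("point must be in front of the camera (negative Z)")
    # Convert focal length from millimetres to pixels.
    focal_px = focal_mm / sensor_width_mm * image_width_px
    depth = -z
    u = image_width_px / 2 + focal_px * x / depth
    v = image_height_px / 2 - focal_px * y / depth  # image Y grows downward
    return (u, v)


def reprojection_errors(points_cam, tracks_px, **camera):
    """Return the pixel distance between each projected point and its 2D track."""
    return [
        math.dist(project_point(p, **camera), t)
        for p, t in zip(points_cam, tracks_px)
    ]


# Hypothetical camera values, for illustration only.
camera = dict(focal_mm=35.0, sensor_width_mm=36.0,
              image_width_px=1920, image_height_px=1080)
```

Running `reprojection_errors` on a handful of proxy corners per frame gives a rough measure of drift: errors that grow as the camera moves usually point to geometry sitting at the wrong depth rather than to a bad solve.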

In cases where more precise or localized reconstruction was needed — for example, curved surfaces, small props, or perspective-sensitive areas — we used ModelBuilder directly in Nuke.

This let us model geometry directly against the original plate and project the plate onto it, keeping everything spatially aligned without leaving the compositing environment.

Main Goals

- Create accurate shadow catchers and contact surfaces for robot integration.

- Enable realistic GI and accurate reflections during rendering.

- Provide collision references for animation and simulation passes.

- Ensure geometric continuity between Blender layouts and Nuke projections.
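Continuity between Blender and Nuke depends in part on axis conventions: Blender's world is Z-up, while Nuke's 3D space is Y-up, and both are right-handed. The sketch below shows the underlying -90 degree rotation about X as plain coordinate math. It assumes raw coordinates are being moved by hand with no unit scaling. Exporters such as FBX or Alembic often apply this conversion already, so check before applying it twice. The function names are hypothetical.

```python
def blender_to_nuke(point):
    """Convert a Z-up (Blender) point to Y-up (Nuke) coordinates.

    Equivalent to rotating -90 degrees about the X axis.
    Assumes no unit scaling between the two scenes.
    """
    x, y, z = point
    return (x, z, -y)


def nuke_to_blender(point):
    """Inverse conversion: Y-up (Nuke) back to Z-up (Blender)."""
    x, y, z = point
    return (x, -z, y)


def round_trip_ok(point, tolerance=1e-9):
    """Check that converting to Nuke space and back returns the same point."""
    restored = nuke_to_blender(blender_to_nuke(point))
    return all(abs(a - b) <= tolerance for a, b in zip(point, restored))
```

A point one unit above the Blender ground plane, `(0, 0, 1)`, maps to `(0, 1, 0)` in Nuke, so ground planes and shadow catchers stay under the robot's feet in both packages.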

Geometry proxies did more than help with lighting: they also kept the robot’s physical logic grounded in real-world scale and perspective.